You are at the office of some physician waiting for them to describe what course of treatment they are going to recommend for your problem. It is not a life-threatening problem, but it is one that has had a serious impact on your life. It has made you anxious, given you sleepless nights, affected your relationship. It is with a mix of hope and apprehension that you wait for the doctor to tell you what they are planning to do to fix it.

And then they tell you: They are going to do X.

X? You ask. OK, tell me more. Why is it a good choice? What makes you recommend this?

Oh, I did not recommend it they say. The AI model did. Mostly because of your age.

Mostly because of my age? What does that mean? Is it more or less dangerous than another one at my age? More efficient?

What it means is that if you had a slightly lower age, the model would have recommended something else, namely Y.

That scenario might sound completely mad, but if you listen to some developers of applications of AI in health, that is exactly what they are selling. I recently heard someone from a startup explain how, because they can apply perturbations on the input to observe changes in the output (i.e. sensitivity analysis), then their method was explainable. Their application was in health. It would fit the scenario above exactly, except that I doubt it would get that easily accepted by the physician.

Of course you will tell me that this is not the only way to achieve explainability. And you would be right, but are those other methods really that different? What is LIME doing other than figuring out what variables have most influenced the output? What are the other methods doing, other than finding correlations between characteristics of the input and the output of the model? Can they not do better?

I’m afraid they can’t.

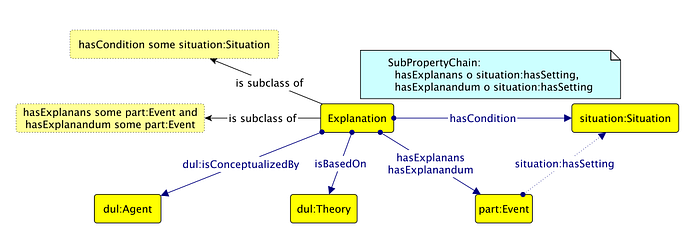

Explanations have to be more than correlations. That’s what has been formalized in medicine, psychology, philosophy and many other disciplines for a very long time. That is what was summarized in a paperfrom a few years ago, born out of the frustration of a researcher working on explanation in computer science, and seeing how disappointing it was. Beyond the co-occurrence of two events (the explanans and the explanandum), those have to be present in the same context and more importantly, they have to be fundamentally connected, through some theory that ground their relation. There has to be something indicating how one can, mechanically, chemically, psychologically, physically, be the cause of the other. That theory is the real explanation. We choose X because we believe, not only from observation but also due to some known phenomenon Y, that it becomes more efficient after a certain age.

Here is therefore our first requirement for what should make an explanation: It has to be more than a correlation. It has to link the explaining component with the explained at some theoretical level. That is also where we have our first problem. It is also reasonable to require for the explanation to actually explain the way the model took a decision. It should explain what the model does. It should explain the underlying logic. This might sound obvious, but when it comes to explaining AIs, it is not. That’s mostly because what they do is actually quite dumb.

If we focus on the machine learning approaches that are not inherently transparent, such as neural networks, then correlations are all they have access to. They find any signal in the data that might show relationships between variables, and exploit them to make decisions. However, the actual nature of those relations does not come into play at all. It is entirely external, and there are many examples to show that if we, with our own knowledge, looked at them closely, we could see how they do not actually reflect a robust way of making decisions. They take shortcuts. They exploit coincidences. They have no foundation. We have all seen examples of spurious correlations like the one below. A neural network cannot make the difference between that and a valid causal link. We can because we know that there is nothing linking those two things, but that knowledge is external. Therefore, trying to use such external knowledge to explain the behavior of the model might help, but it would be contradictory since the model itself does not have access to it. It would not explain the model’s decision. It would not explain its logic. It would just rationalize it from external knowledge.

Another way to take this is more positive: Maybe the goal is therefore to build AIs that are so massive, that include so much information directly or indirectly relevant to the problem, that they would end up internalizing the theories, the phenomena that can explain why there seem to be a relation between some aspects of the input and some aspects of the output. That appears to be, at least partly, the idea behind deep learning, where instead of carefully crafting the features to give the model, we give it more data, more layers, and let it figure it out. But that’s where a third requirement comes to play: Don’t we want our explanation to be understandable? If the vision is to build a vast, ultra complex model, then either the explanation will be themselves ultra complex, or they will be massive simplifications of what the model is really doing (as they are now). We can’t have it both ways: Let the AI learn from billions of data points, based on billions of connections between millions of units, and expect a two sentence explanation of the results that my mother in law could understand. If we can get that, then maybe the problem did not require as much intelligence as we thought? And let’s be clear about it, my mother in law is as much as anybody else the target for the AI, and therefore the audience for the explanation.

Tosummarize, the three requirements considered above appear quite reasonable, especially in the kind of scenarios that was included at the beginning. Those are:

- An explanation has to be more than a correlation.

- An explanation has to reflect the way the model actually comes to a conclusion.

- An explanation has to be understandable by the people affected by the decision made.

Requirements 1 and 2 clash mainly because the AIs that require explanations rely on correlations and would need to access external knowledge to be explainable. Requirements 2 and 3 clash mainly because, to explain the results of very complex models in a way that is understandable, we would have to simplify what is actually happening in the model.

So explainability of AI is just no possible. Should we give up on it then and accept that AI will forever be obscure (and therefore untrustable and unreliable)? Should we altogether give up on that form of AI that requires explanations? Maybe not.

Using AI and using explanations can be a way for us to better understand how decisions should be taken. If knowledge exists and can be found to provide a foundation to explain the results of a model, then maybe we should integrate that knowledge in the model. If it does not exist yet, then maybe we could look for it. Maybe the results are all rubbish and based on coincidences, and maybe they are not. If they are not, there might be something in those results for us to discover. Decision making might not be the primary goal of AI and explainability. It might rather be a tool for us to discover ways to make our own decision stronger, new criteria for making those decisions and new knowledge to ground them.

By Mathieu d’Aquin I’m a developper and a researcher in data science, knowledge engineering and artificial intelligence. I’m a (some would say grumpy) frenchman living in Ireland.